August 15, 2023

A Values-Driven Generative AI Policy for PHOS

2023 will go down in history as an inflection point for all things AI. Around the world, industries are increasingly being disrupted by new forms of artificial intelligence.

Generative AI is a category of AI models that can generate new data, such as images, artwork, design, text/writing, music, audio, video, and animation. As a creative agency that regularly crafts these deliverables for clients, we’ve been asking some new questions at PHOS:

- How will we approach this massive industry disruption?

- Should we completely reject the technology?

- Should we go full send into adoption?

- Is there a middle ground, and if so, where is the line drawn?

- What will drive our decisions?

At PHOS, we don’t care to have a reputation of being a first adopter, but we definitely don’t want to be the last. Instead, we’d rather our decisions for adopting and implementing any new platform or technology be driven by intentional conversations, shared leadership, forward-thinking, and collaborative decision-making.

The Ethics of Generative AI

To jumpstart PHOS’ exploration of generative AI, we invited Michael Sacasas, Executive Director of the Christian Study Center of Gainesville, to speak to our team. In February, Michael gave a presentation entitled “The Search for Meaning: Evaluating the Promise and Peril of Generative AI.”

When assessing new technologies, Mike challenged us to ask ourselves ethical questions I have rarely considered [1]:

- What sort of person will the use of this technology make of me?

- What habits will the use of this technology instill?

- How will the use of this technology affect how I relate to the world around me?

- Does this technology automate or outsource labor or responsibilities that are morally essential?

- What assumptions about the world does the use of this technology tacitly encourage?

- What are the potential harms to myself, others, or the world that might result from my use of this technology?

- What would the world be like if everyone used this technology exactly as I use it?

As we worked together as a team to answer these questions and pursue the creation of a policy surrounding generative AI, we hosted a team-wide discussion to answer two critical questions:

- What do we value (in people, work, humanity, process, the world, etc.) that would shape our view and adoption of new technologies (generative AI or otherwise)?

- What are some acceptable, encouraged uses of generative AI based on those values?

We discussed our own core values of leadership, creativity, love, integrity, and community, along with the pros and cons that support and threaten both our partnerships with clients and our own culture. Beyond our core values, the team created a compelling list of values that shape our adoption or rejection of new technologies:

| | |
| --- | --- |
| Transparency | Security and Safety |
| Human-centric experiences | Human flourishing |
| Emotional connection | Originality |
| Personalization | Authenticity |
| Excellence | Trust |
| Brand (client) voice | Empathy and sympathy |
| Accuracy | Innovation |
| Efficiency | |

Our core values, combined with these team values, create the guideposts for our AI policies at PHOS.

Where We Stand on Generative AI

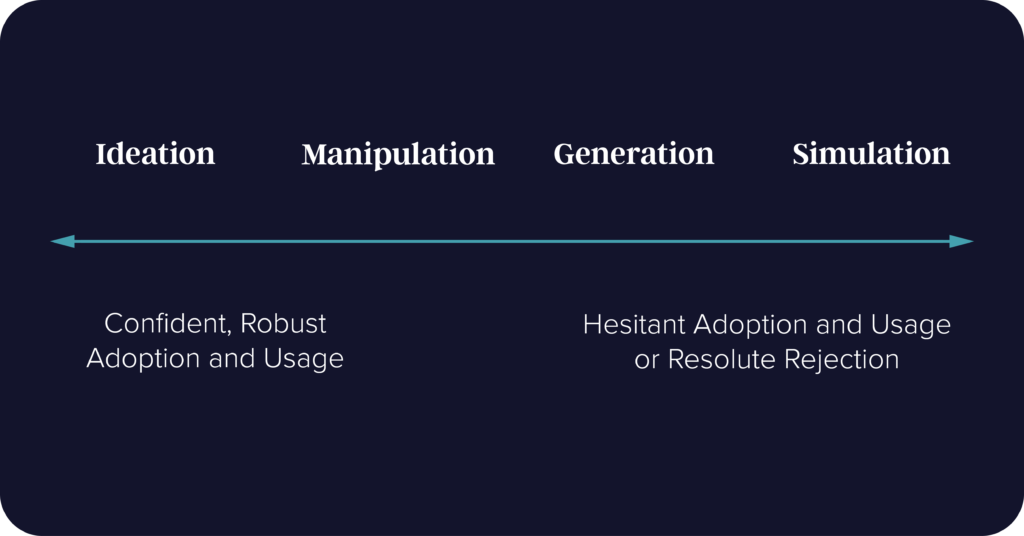

Based on these convictions, what we know about generative AI, the current capabilities of available technologies, and our test cases, PHOS has defined a values-driven approach to generative AI based on four categorical uses:

- Ideation

- Manipulation

- Generation

- Simulation

These categories clarify our team’s confident adoption or resolute rejection of the various uses of generative technologies. Because some gray areas are unavoidable and these technologies are constantly evolving, it’s best for us to plot these four uses on a spectrum.

Creative Ideation (Confident Adoption and Usage)

We believe in the beauty and power of collaboration. We love using people and tools to generate “what-ifs” that spiral into better and better ideas. While we value the role generative AI can play in ideation, we question whether AI can ever actually create a novel idea if it is only analyzing patterns and making predictions based on existing data. Epistemologically, though, isn’t all ideation sourcing ideas from a previously existing data set?

While we treat the results with caution, PHOS has confidently and gladly adopted generative AI to aid our ideation processes. We are creating better work for our clients with this technological integration.

Acceptable uses: nearly endless; keyword ideas, content ideas, visual content ideas (iconography, infographics), email subject lines, promotion ideas, customer retention strategies, trend identification, seasonal promotions, social media hashtags, FAQ identification

Non-acceptable uses: blind acceptance and implementation of ideas generated by AI, replacement/avoidance of collaboration or brainstorming

Data Manipulation (Reasonable Adoption and Usage)

From an efficiency standpoint, this is where generative AI has proven the most useful, ethically sound, and values-aligned for PHOS. We actively use generative AI tools to improve our efficiency, productivity, results, and accuracy.

Still, we do not assume that data returned from data manipulation prompts is always accurate. Any team member using AI-assisted data is responsible for its accuracy, fact-checking, and legal usage.

Acceptable uses: code processing, grammar editing, copyediting and revisions, voice redefinition, copy formatting, function formulation, synthesis (e.g., data), summarization (e.g., meetings), sentiment analysis, speech-to-text transcription, image manipulation (enhance/tweak), audio/video editing

Non-acceptable uses: carte blanche, unedited use of returned data, language translation, image manipulation (misrepresentation, deception, copyright violation, artistic disregard)

Content Generation (Hesitant Adoption and Usage)

Our team has referred to content created by generative AI as “marketing junk food” and “the stock photography of words.” We value originality, humanity, art, and a creative process that ensures that work intended to serve humans is created by humans. As a result, we are extremely cautious here and slow to leverage generative AI to write any significant amount of content for ourselves or client work. Behind-the-scenes work, procedural work, legal work, and the things that are not meant to carry and convey brand voice, purpose, and empathy are acceptable channels for leveraging AI to generate content.

As Michael Sacasas pointed out to us, when AI is wrong, it is “confidently wrong.” It will be entirely sure of its completely inaccurate response. This is dangerous and could produce zettabytes full of misinformation.

The writers’ strike in Hollywood points to another potential threat of AI for content generation: the artist is no longer needed. This, too, is antithetical to our values, mission, and organizational strategy.

For PHOS, AI does not replace the need for a subject matter expert, editor, or creative director in the content creation process. These roles remain essential for ensuring the quality, accuracy, and creativity of our final product.

Acceptable uses: contractual language, process formulation, schema markup, code snippet generation, note-taking, competitor analysis, calls-to-action, headlines, metadata generation, closed captions, data analysis & reporting

Non-acceptable uses: unfiltered, blind acceptance of research-related queries, blog writing, image generation (graphics, logos, icons, layouts), music composition, video generation, story generation (e.g., buyer personas), design-to-code automation, client communication, social media captions

Real-Time Simulation (Apprehensive Adoption and Usage)

Tools that produce real-time or recorded voice simulations and deep fakes are dangerous. Their usage in sexual exploitation and sextortion is harmful to women and children around the world and, therefore, antithetical to PHOS’ mission, vision, and values.

Additionally, we believe that our values of authenticity, transparency, and human connection are almost entirely eroded by real-time generative AI that seeks to emulate real-time person-to-person interaction. At a minimum, chatbots ought to inform users that they are interacting with AI and offer a warm, personal connection as an alternative.

Acceptable uses: user-informed interactive simulation, real-time website conversion optimization, dynamic pricing strategies, content personalization, recorded (i.e., non-real-time) voice modeling with talent consent

Non-acceptable uses: real-time voice modeling pretending to be a past/present person (real-time speech emulation), deep fakes

The Future of Generative AI at PHOS

This is a compilation of our thoughts and is not meant to serve as a legal document. This policy simply represents our current perspectives and ideas.

As we have quoted many times at PHOS, innovative leadership, according to Gary Hamel, is “the ability to be entirely committed to a plan of action while entirely tentative should new information arise simultaneously.” This is where we stand at present, but all of it is subject to change as we grow, learn, mature, and evolve.

PHOS’ AI Learning Committee is keeping a close ear to the ground on new technologies, new AI regulations, and new opportunities to fuel our mission through artificial intelligence. In the end, we are a people-first company seeking to leverage the expertise and creativity of our people to serve other people all around the world with empathy and excellence. Inasmuch as AI can help us do that, we’re all for it, and wherever it threatens that, we’ll be the first to bow out.

[1] https://theconvivialsociety.substack.com/p/the-questions-concerning-technology